Financial advisors make us dumb? Not so fast...

July 29, 2009

Full disclosure: I work for a firm that provides financial advice. I wouldn’t do it if I didn’t think we were helping our clients, but I can’t pretend to be an impartial party. I do hope it also makes my opinion a little more informed.

A recent paper on how we use expert financial advice has been grabbing a bit of attention – Dan Ariely called the results “troublesome, perhaps even frightening”. In a Wired article titled “Given ‘Expert’ Advice, Brains Shut Down”, the paper’s author states:

When the expert’s advice made the least sense, that’s where we could see the behavioral effect… In this world, you take advice, integrate it with your own information, and come to a decision. If that were true, we’d have seen activity in regions that track decisions. But what we found is that when someone receives advice, those relationships went away.

Yikes! We stop thinking when someone gives us advice! I need to read the article. So I did, and you can too (bless PLoS). Reaction? Don’t believe the hype. What follows is about the choices people made. Criticisms of the neuroimaging analysis are beyond my skill set.

- The “expert advice” was a single word – “Accept” or “Reject”, detailing what “the expert would do”. The expert was an economist who explicitly made conservative recommendations¹.

- If you tell me someone is an expert and then tell me their opinion, I’d believe you too, and that would affect my behaviour. Whether people question someone’s expertise is almost secondary to how they integrate advice they take to be expert into their decisions.

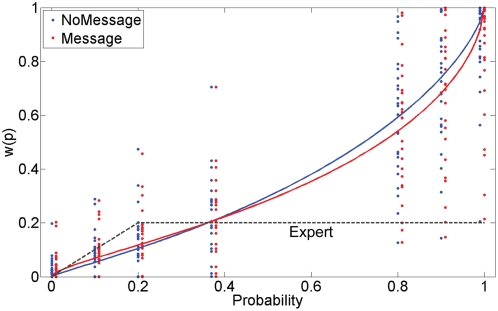

- The graph below depicts the two estimated probability weighting functions, with and without expert advice. There is a statistically significant difference between them – we can reliably tell one from the other. But the change appears pretty small: the maximum difference between the functions (at an objective probability of 0.8) appears to be 0.05, or a maximum effect of about 5 percentage points.

Quoting the paper: “the expert’s advice led to a significant change … in the direction of the expert’s advice.” So the respondents listened to the expert a bit, but didn’t do anything really stupid. OK, is that supposed to be surprising or interesting?

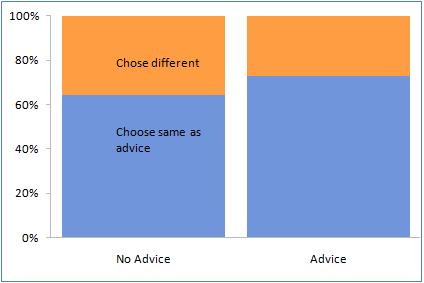

- The effect on choices was as follows: when not told what the expert would do, 64% did what he would have recommended. When told his recommendation, 72% did what he recommended. That’s right: a difference of 8 percentage points, when the baseline agreement was already 64%.
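To put that shift in perspective, here is a small sketch of the arithmetic using only the two figures quoted above (64% and 72%). The function name `summarize_shift` and the “headroom” measure are my own illustration, not anything from the paper, and the headroom figure assumes the change is a net movement of people who otherwise would have disagreed with the expert.

```python
def summarize_shift(before: float, after: float) -> dict:
    """Describe a change in an agreement rate in three different ways.

    `before` and `after` are proportions between 0 and 1.
    All results are returned as percentages rounded to one decimal place.
    """
    change = after - before
    return {
        # Plain difference between the two rates.
        "percentage_points": round(change * 100, 1),
        # Change relative to the starting rate.
        "relative_change_pct": round(change / before * 100, 1),
        # Assumption: net share of those who did NOT already agree
        # with the expert and then moved to his recommendation.
        "share_of_headroom_pct": round(change / (1 - before) * 100, 1),
    }


# The two figures quoted in the post.
shift = summarize_shift(before=0.64, after=0.72)
for name, value in shift.items():
    print(f"{name}: {value}")
```

However you slice it, most people would have done what the expert suggested anyway, and most of those who wouldn’t have still didn’t.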

Thoughts…

These results aren’t as impressive as I thought they’d be. The “harm” done by the advice was measured by benchmarking responses against expected utility theory, so it’s questionable whether subjects were really “harmed” by it. And the “expert” explicitly states he’s giving conservative advice. This is interesting because in actual financial advisory settings, you are never sued for advising too little risk. To my knowledge, every article you will read is about financial advisors recommending too much risk. This one, in fact, defines harm as not taking on enough risk!

I do think there is a great study in this, à la the Stanford prison experiment: how far will we follow experts’ advice, including when it’s obviously harmful or wrong? But this experiment doesn’t really ask those questions.

Experts provide advice about things we supposedly know less about than they do, so that we don’t have to know everything they know to come to an equally informed conclusion. While I do think everyone should assess expertise critically², I think this paper should have been titled “Expert advice influences choices and decreases cognitive load”, which is pretty much what we go to experts for.

1. The quote I found was:

Though the recommendations were delivered under his imprimatur, Noussair himself wouldn’t necessarily follow it. The advice was extremely conservative, often urging students to accept tiny guaranteed payouts rather than playing a lottery with great odds and a high payout.

2. And that is exactly why I read the articles myself.